A whisper. A few slurred words. For those who suffer from dysarthria, a motor speech disorder, basic communication is a challenge that affects both their professional and personal lives. But now an innovation based on artificial intelligence (AI), developed in India, could be life-changing.

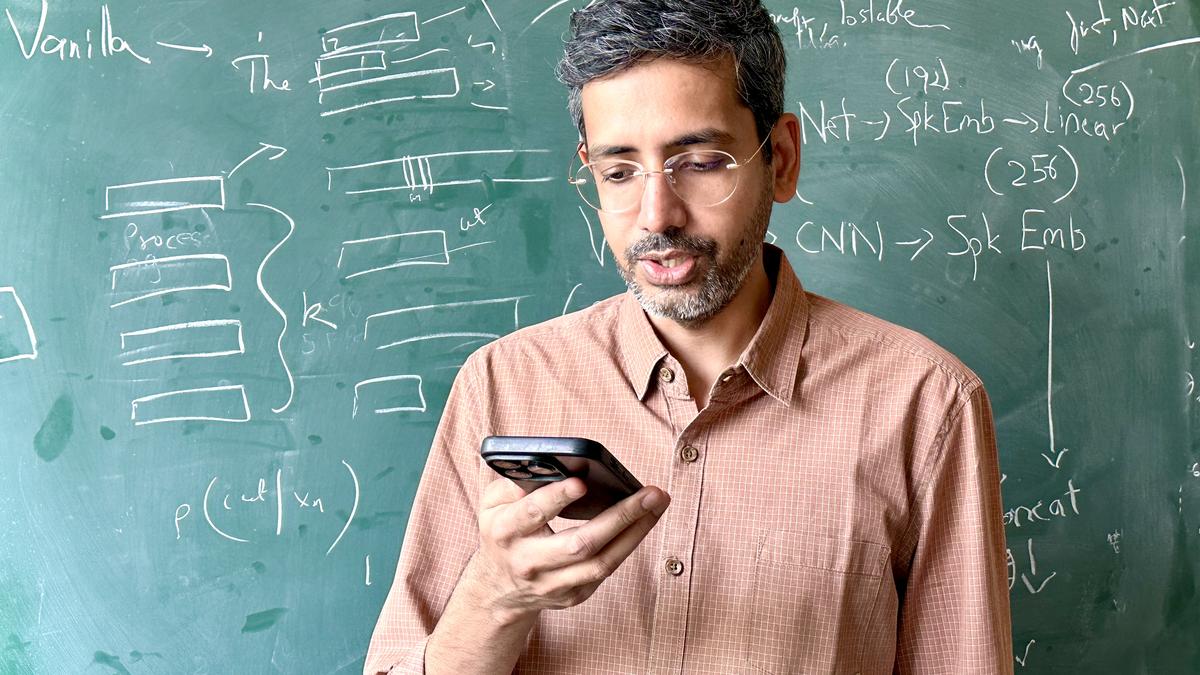

Led by associate professor Vineet Gandhi of the International Institute of Information Technology (IIIT), Hyderabad, a team has developed a simple app that helps people talk by acting as an audio translator, processing the speaker's voice almost in real time. The app can either convert slurred speech into clear, natural-sounding speech or use a camera to analyse lip movements and subtle throat vibrations to generate intelligible speech.

While the current project runs in English, the team's next aim is to bring these technologies to regional languages, including Hindi, Telugu, and Tamil, as many people across the country cannot yet benefit from accessibility-focused AI models. For this work, Mr. Gandhi won the Anusandhan National Research Foundation (ANRF) award in 2026.

Excerpts from an interview:

What inspired you to begin work on this humanitarian AI project?

My research has always been driven by a simple question: what real problem can technology help solve?

My academic training is primarily in computer vision, but about four years ago I began to see exciting possibilities emerging in speech research and decided to explore the field more deeply. I became increasingly aware of the challenges faced by many individuals who lose their ability to speak due to medical conditions. The impact of this loss extends far beyond communication; it affects independence, identity, and connection.

Recognising this need inspired me to focus my work on accessibility-driven technologies designed to restore or enable speech, with the goal of helping people regain their voice.

Could you describe how the app works for people with speech impairment?

The app is designed to convert impaired or distorted speech into clear, natural-sounding speech with only a few hundred milliseconds of delay. A user simply speaks in their own voice, and the system processes it to produce intelligible speech for the listener.

We are also developing a complementary lip-to-speech capability, where a person can silently move their lips and the system generates the corresponding speech.

A key aspect we are focusing on is personalisation: users can calibrate the application to their own voice by reading a few minutes of text aloud in the app.

We aim for these technologies to be integrated into common communication platforms, such as web-based calling applications, making everyday communication easier for people with speech impairments.

You also aim to expand this technology to regional Indian languages. How do you hope to achieve this?

At present, much of the global speech technology ecosystem is predominantly designed for English, and our initial experiments have naturally followed the same trajectory. However, a major goal of our research is to extend these capabilities to regional Indian languages, where accessible speech technologies are equally important.

To achieve this, we plan to collect speech data in Indian languages and develop data-efficient models suited for low-resource scenarios. Our approach includes data augmentation and efficient fine-tuning of pre-trained models.

We have already conducted preliminary experiments in Hindi with promising results, and with support from the Anusandhan National Research Foundation, we aim to further enhance and expand this work to additional Indian languages.

You believe that “accessibility and linguistic diversity” are crucial for AI research in India. Could you elaborate?

Accessibility and linguistic diversity are fundamental considerations for AI research in India. Having spent several years in Europe, I observed that accessibility is far more systematically integrated into public infrastructure and digital services there.

In contrast, India still has significant gaps, even in public spaces such as railway stations, where basic accessibility provisions are often limited. This highlights the broader need to design technologies that consciously include people with disabilities.

At the same time, India’s linguistic diversity presents another important dimension. In many parts of the country, particularly in rural regions, speech remains the most natural and primary mode of interaction. Text-heavy or typing-based interfaces may not always be practical or inclusive in such contexts. Therefore, AI systems designed for India must prioritise speech-based interaction and support multiple regional languages.

Taken together, meaningful accessibility and strong support for linguistic diversity are essential if digital technologies are to be truly inclusive and widely usable across the country.

WHO has said the “future of healthcare is digital”…

The World Health Organization has emphasised that the future of healthcare will be increasingly digital. In a country like India, telemedicine can play a transformative role, particularly when supported by basic diagnostic infrastructure at the local level, which enables more accurate remote consultations.

Another important direction is AI-assisted diagnostics, where machine learning systems analyse medical images, speech, or health records to support early disease detection and prediction.

Practical solutions are already emerging. For example, 'Shishu Maapan', developed by Wadhwani AI, helps measure a newborn's weight and size simply from mobile photos and is being adopted by frontline health workers such as ASHA workers.

Digital tools are also enabling assistive healthcare technologies, including speech restoration systems for individuals who have lost their ability to speak, and wearable devices that continuously monitor health parameters and alert doctors to potential anomalies. These developments illustrate how digital innovation can make healthcare more accessible and scalable.

A common criticism of AI-generated speech is that while it’s intelligible, it often fails to capture the unique cadence of the speaker. When restoring a voice to someone with dysarthria, how do you balance the need for clear communication with the need to preserve the user’s individual human essence?

This is an important concern. If recordings of the speaker’s original voice from before the onset of dysarthria are available, modern voice cloning techniques can recreate that voice with as little as 10 seconds of speech. So preserving an individual’s vocal identity is technically feasible today, and there is substantial research demonstrating this capability. Our current app, however, focuses primarily on restoring content intelligibility, ensuring that what the user intends to say is conveyed clearly. For now, the generated speech uses a common voice rather than a personalised one.

That said, text-to-speech systems are becoming increasingly natural, to the point that they are now being integrated into conversational bots that are replacing many traditional customer service applications. Emotional nuance remains more challenging, as we discussed in our earlier work on empathic speech generation, but progress is rapid.

How does the model differentiate between impaired speech and a noisy background as the user navigates, say, a busy Indian street?

This is indeed a significant challenge in India, where real-world environments can be extremely chaotic. Anyone who has thought about deploying self-driving cars here quickly realises how unpredictable our roads can be: traffic patterns, honking, pedestrians, and vehicles all interact in highly dynamic ways. Speech technology faces a similar level of complexity.

In our experiments, we improve robustness using noise augmentation, where we simulate different noisy environments during training so the model learns to handle background sounds. Ultimately, the most effective solution is to collect and train on more real-world data from noisy settings. Even then, some performance degradation is inevitable because separating impaired speech from heavy background noise is fundamentally a difficult problem.
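The noise augmentation Mr. Gandhi describes can be illustrated with a short sketch. This is not the team's code: it assumes audio is a plain list of float samples, and the helper names `mean_power`, `mix_at_snr` and `augment`, the noise bank, and the 0 to 20 dB signal-to-noise ratio (SNR) range are all hypothetical choices made for illustration. The idea is to scale a background-noise clip so that, when added to clean speech, the mixture reaches a randomly chosen SNR, letting a model hear the same utterance under many simulated noise conditions during training.

```python
import math
import random


def mean_power(signal):
    """Average power of a signal: the mean of its squared sample values."""
    return sum(x * x for x in signal) / len(signal)


def mix_at_snr(clean, noise, snr_db):
    """Add `noise` to `clean` so the mixture has the requested SNR in dB.

    The noise clip is repeated (tiled) or trimmed to match the clean clip.
    """
    noise = [noise[i % len(noise)] for i in range(len(clean))]
    p_clean = mean_power(clean)
    p_noise = mean_power(noise)
    if p_noise == 0.0:
        return list(clean)  # silent noise clip: nothing to add
    # SNR_dB = 10 * log10(p_clean / (gain**2 * p_noise)); solve for gain.
    gain = math.sqrt(p_clean / (p_noise * 10 ** (snr_db / 10)))
    return [c + gain * n for c, n in zip(clean, noise)]


def augment(clean, noise_bank, snr_range=(0.0, 20.0), rng=None):
    """Hypothetical training-time augmentation step.

    Picks a random noise clip and a random SNR (the range is an
    illustrative assumption) and returns the noisy clip and the SNR used.
    """
    rng = rng or random.Random()
    noise = rng.choice(noise_bank)
    snr_db = rng.uniform(*snr_range)
    return mix_at_snr(clean, noise, snr_db), snr_db
```

In a real pipeline the noise bank would hold recordings of sounds such as traffic or crowds, and an array library would replace the Python lists, but the scaling rule stays the same. Sampling a fresh noise clip and SNR each time a clip is used is what exposes the model to many different noisy environments.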

divya.gandhi@thehindu.co.in